The 5-Step Process

I followed a structured research and consulting process — from raw data to delivered recommendations.

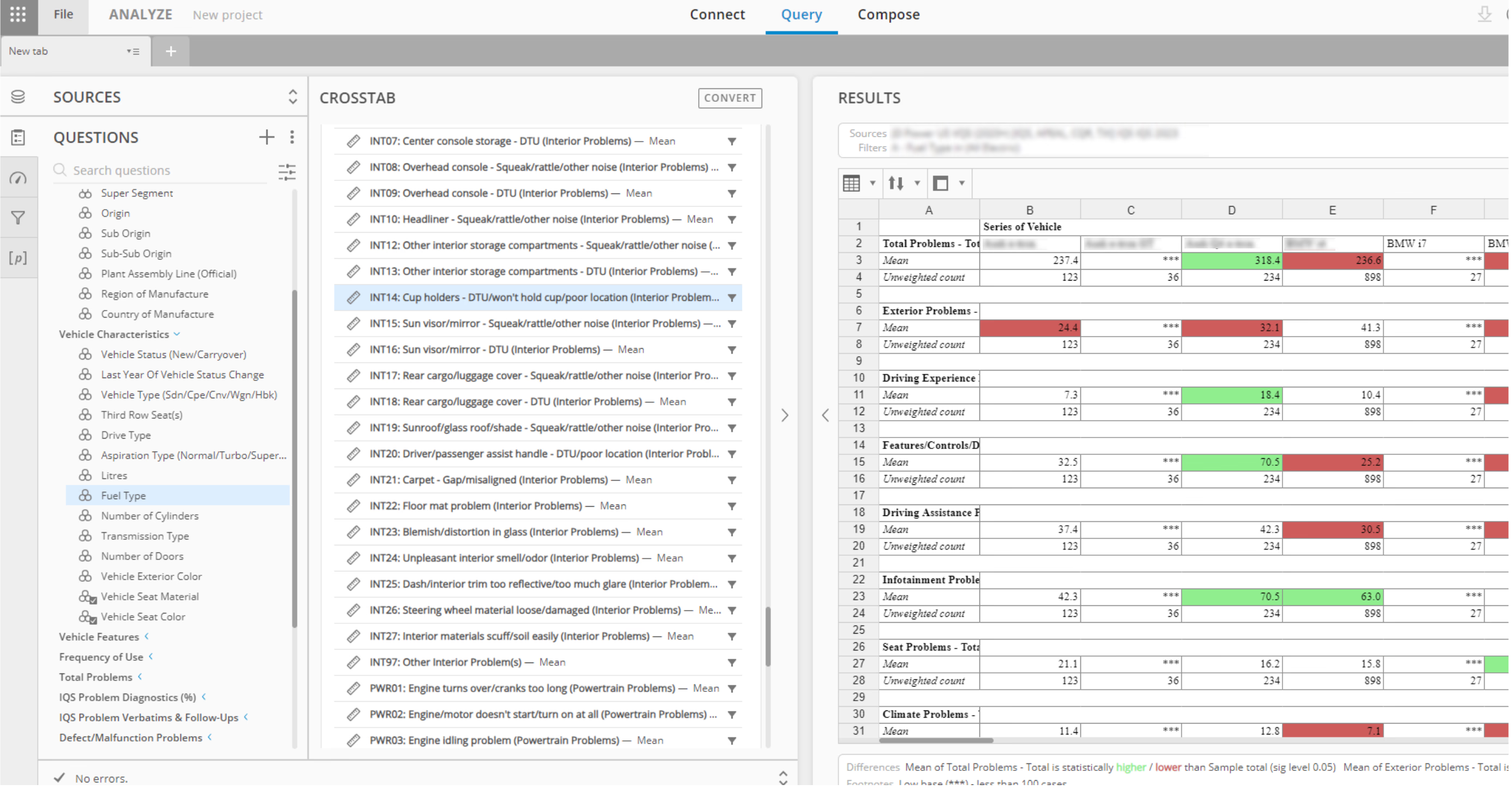

Quantifying Performance

I started by identifying where a vehicle excels or falls short against industry benchmarks. Using J.D. Power’s proprietary survey data and probability analysis tools, I could pinpoint exactly which categories — infotainment, driving assistance, interior comfort — scored below the competitive set.

Crosstab analysis: green = above benchmark, red = below. Each row is a UX category.
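As an illustration only, the green/red flagging described above can be sketched in a few lines of Python. This is a minimal sketch, not the proprietary tooling: the scores, the benchmark values, and the function name `flag_against_benchmark` are invented placeholders, and the "neutral" flag for an exact tie is an assumption.

```python
# Hypothetical data: every score below is an invented placeholder,
# not real survey output.
vehicle_scores = {
    "Infotainment": 7.1,
    "Driving assistance": 7.8,
    "Interior comfort": 8.4,
}
benchmark_scores = {
    "Infotainment": 7.6,
    "Driving assistance": 7.8,
    "Interior comfort": 8.0,
}


def flag_against_benchmark(vehicle, benchmark):
    """Return {category: (gap, flag)} for each category in `vehicle`.

    gap  = vehicle score minus benchmark score, rounded to 2 decimals
    flag = "green" above benchmark, "red" below, "neutral" on a tie
    """
    result = {}
    for category, score in vehicle.items():
        gap = round(score - benchmark[category], 2)
        if gap > 0:
            flag = "green"
        elif gap < 0:
            flag = "red"
        else:
            flag = "neutral"  # assumption: ties are neither green nor red
        result[category] = (gap, flag)
    return result


flags = flag_against_benchmark(vehicle_scores, benchmark_scores)
for category, (gap, flag) in flags.items():
    print(f"{category:<20} {gap:+.1f}  {flag}")
```

Each row corresponds to one UX category, so the red rows become the shortlist for the verbatim analysis in the next step.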

Understanding the Why

Numbers tell you where the problem is. Verbatims tell you why. I analyzed thousands of customer comments to find patterns — the same frustrations appearing across different owners, different models, different years.

Verbatim Analysis 2023

VR Command Recognition: 41 customer verbatims categorized into 6 themes, each theme mapped to a specific design recommendation
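For readers curious how comments turn into theme counts, here is a rough Python sketch. It assumes simple keyword matching as a stand-in for the manual reading and coding of comments; the theme names, keywords, and sample comments are all invented for illustration and are not actual customer verbatims.

```python
from collections import Counter

# Hypothetical themes and keywords, invented for this sketch.
THEME_KEYWORDS = {
    "Misrecognized command": ["wrong", "misheard", "misunderstood"],
    "No response": ["no response", "ignored", "nothing happened"],
}


def tag_themes(comment):
    """Return every theme whose keywords appear in the comment (case-insensitive)."""
    text = comment.lower()
    return [
        theme
        for theme, keywords in THEME_KEYWORDS.items()
        if any(keyword in text for keyword in keywords)
    ]


# Invented example comments, not real survey responses.
comments = [
    "Voice control misheard the address twice.",
    "I asked for a radio station and nothing happened.",
    "It called the wrong contact and then ignored me.",
]

# A single comment can count toward more than one theme.
theme_counts = Counter(theme for c in comments for theme in tag_themes(c))

for theme, count in theme_counts.most_common():
    print(f"{theme}: {count}")
```

Keyword matching only gives a first pass; comments that match no theme, or the wrong one, still need a human read before a theme is tied to a recommendation.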

Evaluating Prototypes

With data and verbatims in hand, I evaluated pre-production vehicles at testing facilities. I focused on the specific problem areas the data identified — testing the actual experience against what customers reported.

Hands-on evaluation at a testing facility — every annotation maps to a data-backed issue

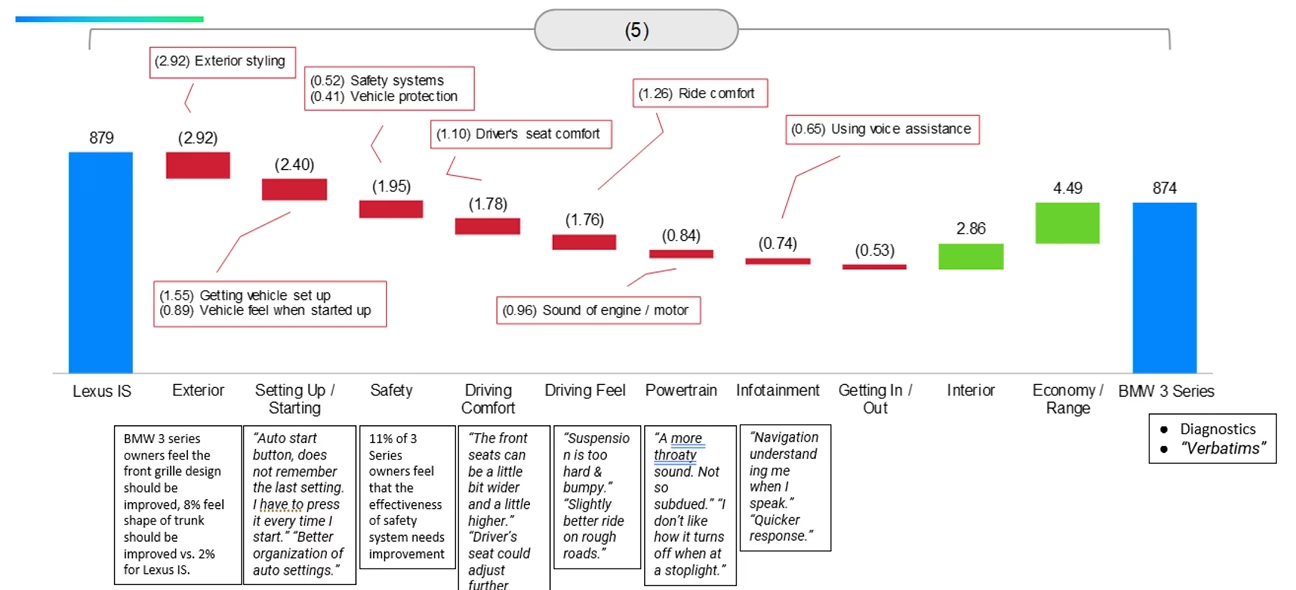

Building the Case

I combined quantitative data, verbatim themes, and evaluation findings into visual reports. Competitive benchmarks, diagnostic breakdowns, and trend analysis were all designed to make the case undeniable for the client.

Competitive analysis: Range vs. Time, IQS diagnostics, and performance benchmarks across EV models

Delivering Insights

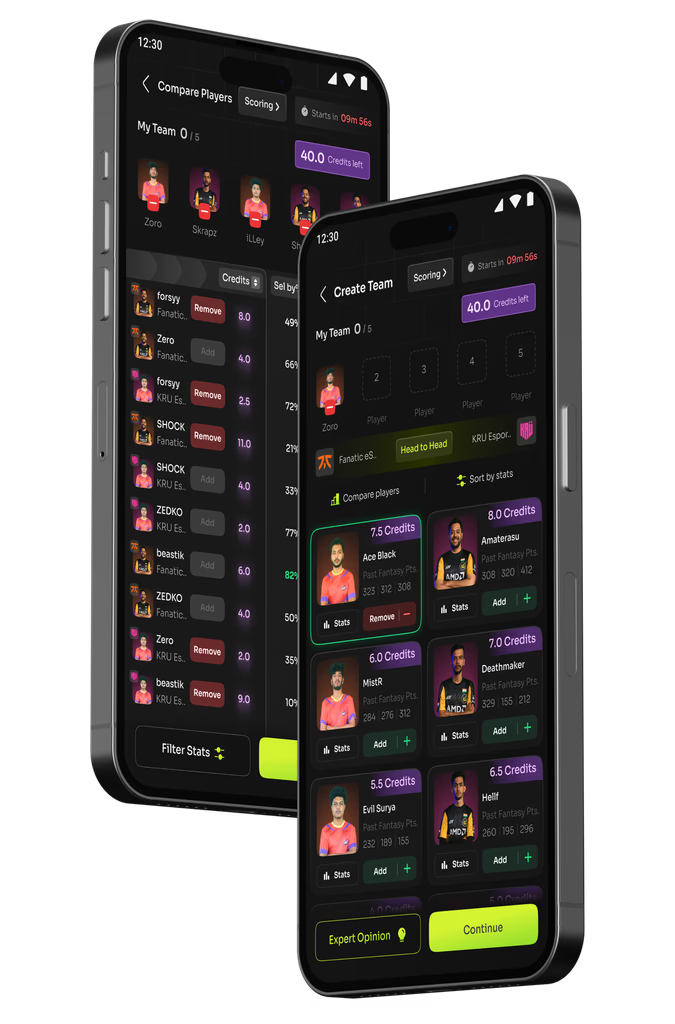

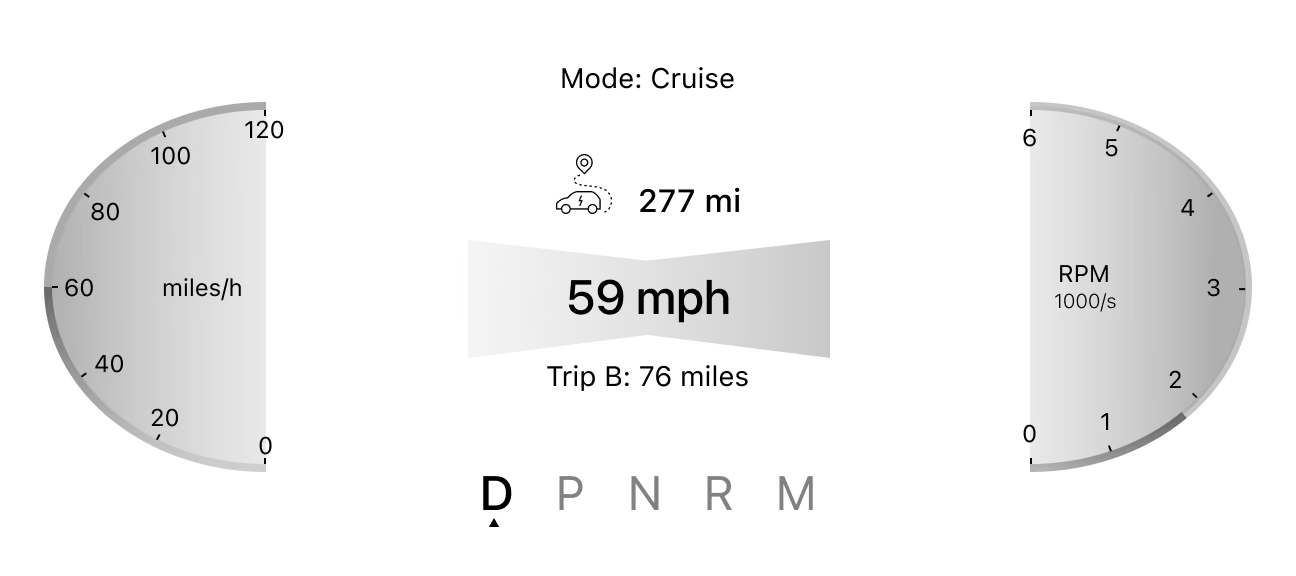

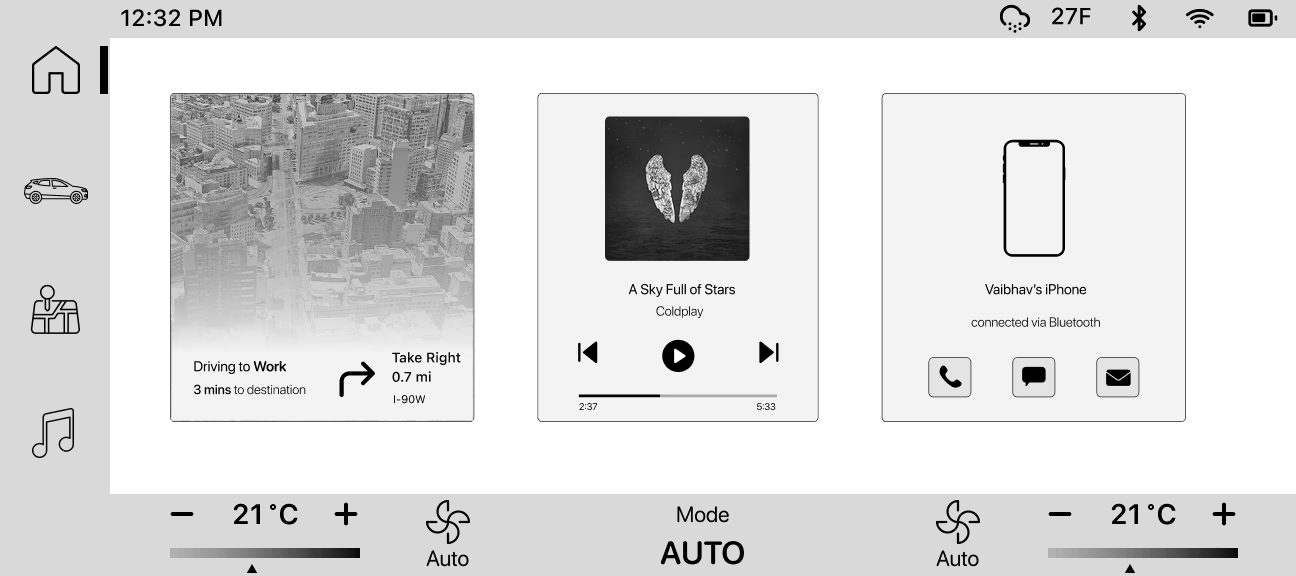

The final step was presenting findings to client teams — often directly to engineering and design leads. I delivered data-backed recommendations, demonstrated issues on prototypes, and provided wireframes for proposed improvements.

HMI wireframes: instrument cluster and infotainment redesign proposals

The deliverable: category-level diagnostic with matched verbatim quotes and competitive positioning